Because the objects that we will be dealing with in this section are vectors and matrices, our usual notational convention for random and nonrandom quantities leads to notation that is a bit nonstandard. On the other hand, the notation we will use works well for illustrating the similarities between results for random matrices and the corresponding results in the one-dimensional case.
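As an illustration of that parallel, the display below sets some standard one-dimensional results beside their vector counterparts. It uses upper case for random quantities and lower case for nonrandom ones. The symbols are our own choice for this sketch, not necessarily the section's: \(X, Y\) are random vectors (or, in the first definition, a random matrix, with expectation taken entry by entry), \(a\) is a nonrandom matrix, and \(b\) is a nonrandom vector of compatible size.

\[
\begin{aligned}
&\text{Definitions:} && E(X) = \bigl[E(X_{ij})\bigr], \quad \operatorname{cov}(X, Y) = E\bigl([X - E(X)]\,[Y - E(Y)]^{T}\bigr) \\
&\text{One dimension:} && E(aX + b) = a\,E(X) + b, \quad \operatorname{var}(aX + b) = a^{2}\operatorname{var}(X) \\
&\text{Vectors:} && E(aX + b) = a\,E(X) + b, \quad \operatorname{cov}(aX + b,\, aX + b) = a\,\operatorname{cov}(X, X)\,a^{T}
\end{aligned}
\]

The entry \((i, j)\) of \(\operatorname{cov}(X, Y)\) is \(\operatorname{cov}(X_i, Y_j)\), so the diagonal of \(\operatorname{cov}(X, X)\) holds the variances \(\operatorname{var}(X_i)\). The factor \(a^{2}\) in one dimension becomes \(a\,(\cdot)\,a^{T}\) for vectors.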

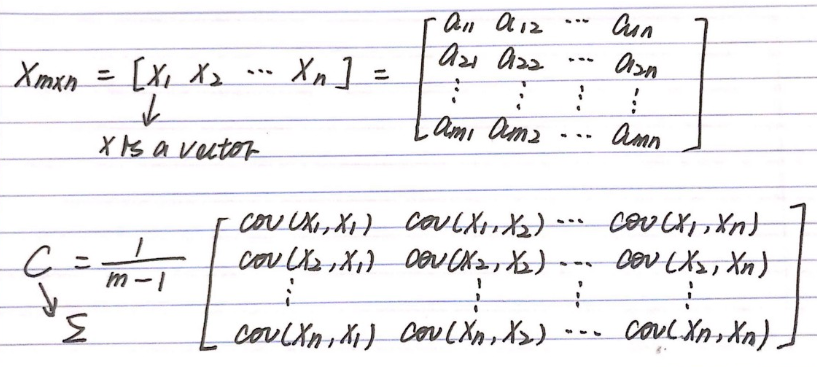

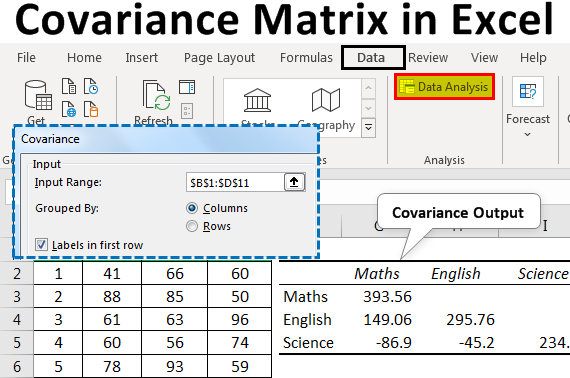

The main purpose of this section is a discussion of expected value and covariance for random matrices and vectors. These topics are somewhat specialized, but they are particularly important in multivariate statistical models and for the multivariate normal distribution. This section requires some prerequisite knowledge of linear algebra. We assume that the various indices \(m, n, p, k\) that occur in this section are positive integers. We also assume that the expected values of the real-valued random variables we reference exist as real numbers, although extensions to cases where expected values are \(\infty\) or \(-\infty\) are straightforward, as long as we avoid the dreaded indeterminate form \(\infty - \infty\). We will follow our usual convention of denoting random variables by upper case letters and nonrandom variables and constants by lower case letters.

In a covariance matrix of asset returns, the diagonal holds the variances (the squares of the volatilities) of the assets, and the off-diagonal elements are covariances (the product of two volatilities times their correlation). The covariance matrix is also symmetric: the lower half of the matrix (below the diagonal) is a mirror image of the upper half.

One estimation approach is to first estimate the covariance matrix and then to shrink this estimate toward a parsimonious, structured form of the matrix. In this approach, the data determine how much shrinkage is required.

An interesting use of the covariance matrix is the Mahalanobis distance, which measures multivariate distances while taking covariance into account. For a point \(x\), mean vector \(\mu\) and covariance matrix \(\Sigma\), it is \(d(x) = \sqrt{(x - \mu)^{T}\,\Sigma^{-1}\,(x - \mu)}\). With \(\Sigma = I\) it reduces to the ordinary Euclidean distance.

The random walk has been studied extensively by scientists from various disciplines.
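The covariance-matrix structure, the Mahalanobis distance and data-driven shrinkage can be sketched together in NumPy. Everything here is an assumption made for illustration. The asset volatilities and correlations are invented, and the helper names `mahalanobis` and `ledoit_wolf` are hypothetical. The shrinkage rule is one well-known instance of the idea, the Ledoit-Wolf estimator with a scaled-identity target. We swap it in because the text does not say which target or formula it has in mind.

```python
import numpy as np

# Hypothetical data: 500 days of returns for three assets, drawn from a
# covariance matrix built from assumed volatilities and correlations.
rng = np.random.default_rng(0)
vols = np.array([0.10, 0.20, 0.15])
corr = np.array([[1.0, 0.5, 0.2],
                 [0.5, 1.0, 0.3],
                 [0.2, 0.3, 1.0]])
true_cov = np.outer(vols, vols) * corr  # entry (i, j) = sigma_i * sigma_j * rho_ij
returns = rng.multivariate_normal(np.zeros(3), true_cov, size=500)

# Sample covariance matrix (rows are observations, columns are assets).
S = np.cov(returns, rowvar=False)

# Structure: symmetric, variances on the diagonal, and each off-diagonal entry
# equal to the product of two volatilities times their correlation.
sample_vols = np.sqrt(np.diag(S))
sample_corr = np.corrcoef(returns, rowvar=False)
assert np.allclose(S, S.T)
assert np.allclose(np.diag(S), returns.var(axis=0, ddof=1))
assert np.allclose(S, np.outer(sample_vols, sample_vols) * sample_corr)


def mahalanobis(x, mean, cov):
    """Hypothetical helper: distance of x from mean that accounts for cov."""
    diff = np.asarray(x, dtype=float) - mean
    # Solve cov @ z = diff rather than forming the inverse explicitly.
    return float(np.sqrt(diff @ np.linalg.solve(cov, diff)))


def ledoit_wolf(X):
    """Hypothetical helper: shrink the sample covariance of X (n x p) toward m * I.

    m is the average sample variance; the shrinkage intensity delta is
    estimated from the data, following the Ledoit-Wolf formula.
    """
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S_ml = Xc.T @ Xc / n                   # sample covariance, divided by n
    m = np.trace(S_ml) / p
    target = m * np.eye(p)
    d2 = np.sum((S_ml - target) ** 2) / p  # squared distance of S_ml from target
    # Average squared distance of each rank-one term x x^T from S_ml.
    b2_bar = sum(np.sum((np.outer(x, x) - S_ml) ** 2) for x in Xc) / (n**2 * p)
    b2 = min(b2_bar, d2)
    delta = b2 / d2 if b2 > 0 else 0.0     # intensity in [0, 1]
    return delta * target + (1.0 - delta) * S_ml, delta


S_shrunk, delta = ledoit_wolf(returns)
print(f"shrinkage intensity: {delta:.3f}")
print("Mahalanobis distance of day 0:",
      mahalanobis(returns[0], returns.mean(axis=0), S))
```

The shrunk matrix keeps the trace of the sample covariance, so only the spread of the eigenvalues is pulled toward the target. scikit-learn's `sklearn.covariance.ledoit_wolf` can serve as a cross-check. Solving a linear system in `mahalanobis` is generally preferred to inverting the covariance matrix explicitly.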

#COVARIANCE MATRIX SERIES#

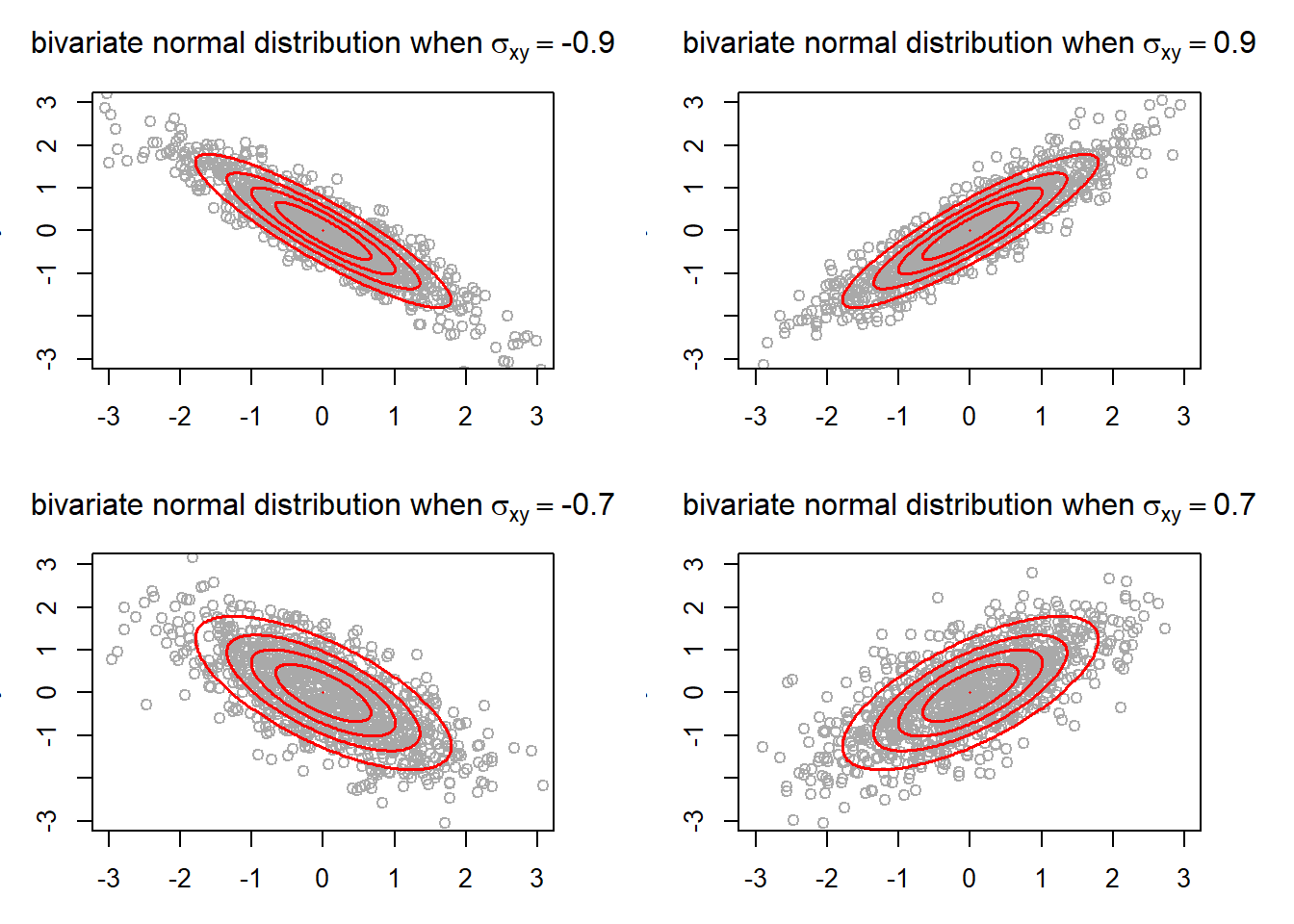

This article gives a geometric and intuitive explanation of the covariance matrix and of the way it describes the shape of a data set. We will describe the geometric relationship of the covariance matrix with the use of linear transformations and eigendecomposition.

Before we get started, we shall take a quick look at the difference between variance and covariance. Variance measures the variation of a single random variable (like the height of a person in a population), whereas covariance is a measure of how much two random variables vary together (like the height and the weight of a person in a population).

Consider an ellipse drawn with the two basis vectors, that is, the eigenvectors of the covariance matrix scaled by the standard deviations \(\sigma\) along them. If we multiply \(\sigma\) by 3 and count the points inside the ellipse, the three-sigma rule suggests a coverage of approximately \(99.7\%\). Strictly, that figure holds along a single axis. For bivariate normal data the \(3\sigma\) ellipse contains \(1 - e^{-9/2} \approx 98.9\%\) of the points.

We can now obtain the transformation matrix \(T\) from the covariance matrix, and we can use the inverse of \(T\) to remove the correlation from the data (whiten it).

An important and special ARMA series that merits discussion is the random walk.
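The whitening step can be sketched in NumPy as follows, assuming synthetic data drawn from an invented covariance matrix. From the eigendecomposition \(C = V \Lambda V^{T}\) we take \(T = V \Lambda^{1/2}\), a rotation times a scaling, so that \(T T^{T} = C\). Multiplying the data by \(T^{-1}\) should then give data whose covariance matrix is the identity. This is one common construction of \(T\), not necessarily the only one intended. The last lines check the three-sigma coverage figures numerically.

```python
import numpy as np

# Synthetic correlated 2-D data; this covariance matrix is an assumption
# chosen only for illustration.
rng = np.random.default_rng(42)
true_cov = np.array([[4.0, 1.8],
                     [1.8, 1.0]])
X = rng.multivariate_normal([0.0, 0.0], true_cov, size=100_000)

# Calculate the transformation matrix from the eigendecomposition C = V L V^T.
C = np.cov(X, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(C)       # eigh, because C is symmetric
T = eigvecs @ np.diag(np.sqrt(eigvals))    # rotation times scaling, so T @ T.T == C

# Transform the data with the inverse transformation matrix T^-1 (whitening).
# Rows of X are points, so y = T^-1 x becomes Y = X @ (T^-1)^T.
Y = X @ np.linalg.inv(T).T

# Covariance matrix of the uncorrelated data: the identity up to rounding.
C_white = np.cov(Y, rowvar=False)
print(np.round(C_white, 6))

# In whitened coordinates the Mahalanobis distance is the Euclidean norm,
# so the 3-sigma ellipse becomes the circle of radius 3.
r = np.linalg.norm(Y - Y.mean(axis=0), axis=1)
inside_ellipse = np.mean(r <= 3.0)
within_axis = np.mean(np.abs(Y[:, 0] - Y[:, 0].mean()) <= 3.0)
print(f"inside 3-sigma ellipse: {inside_ellipse:.4f} (theory: {1 - np.exp(-4.5):.4f})")
print(f"within 3 sigma on one axis: {within_axis:.4f} (theory: about 0.9973)")
```

Because \(\Lambda^{1/2}\) holds the standard deviations along the eigenvectors, the columns of \(T\) are the scaled basis vectors used to draw the ellipse. Applying \(T^{-1}\) maps that ellipse onto a circle.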